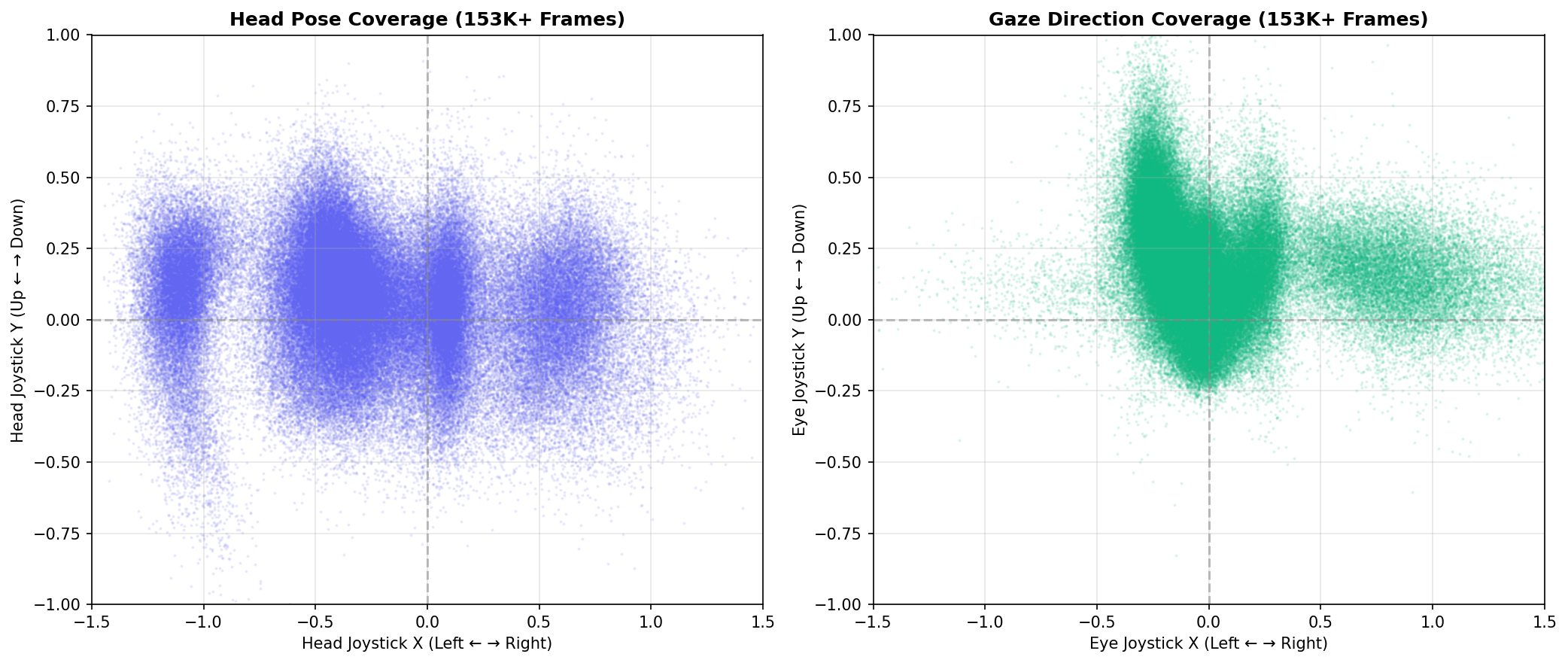

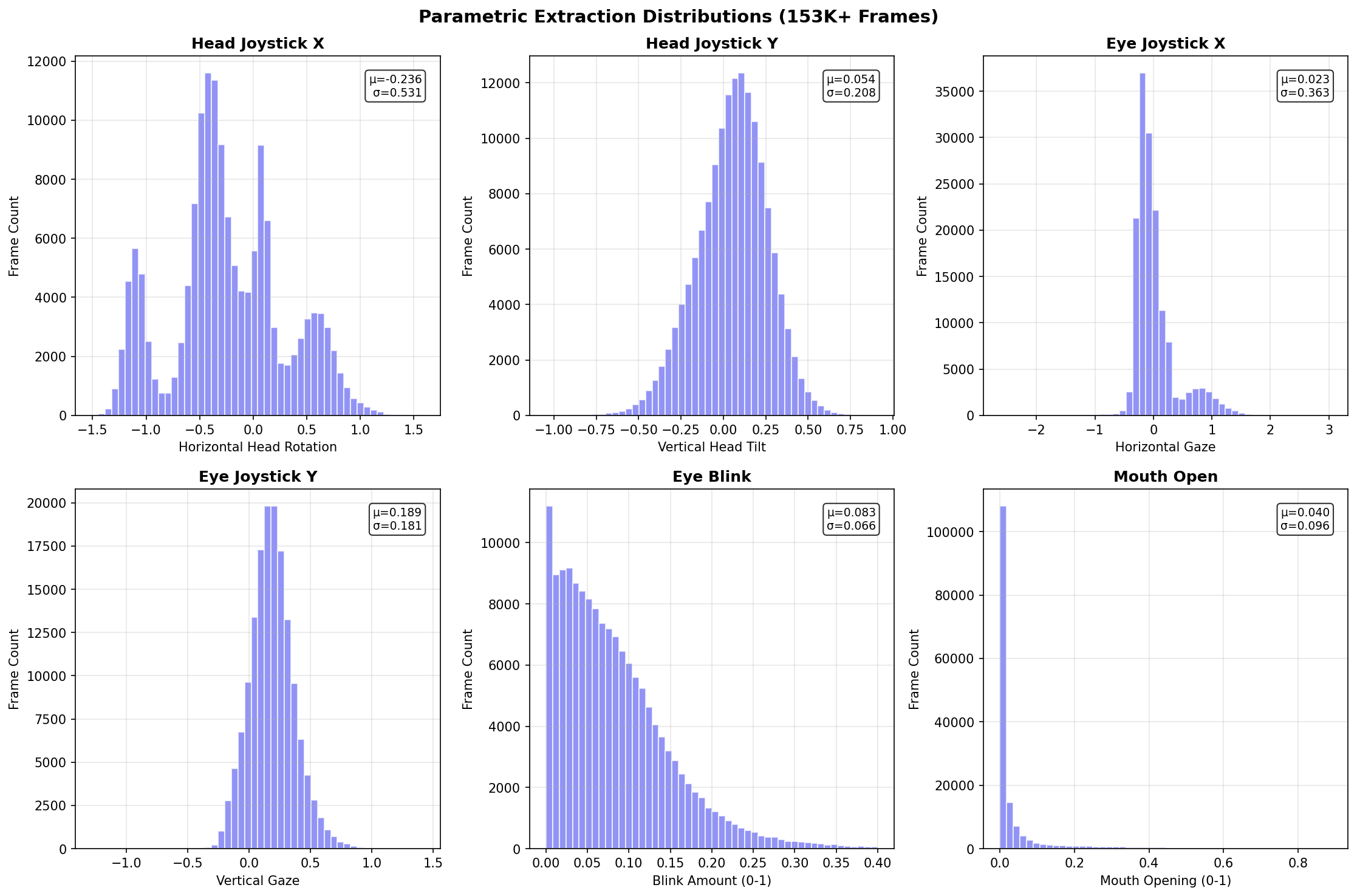

Parameter Distribution Analysis

Statistical breakdown of all extracted parameters across our 153K+ frame dataset.

Distribution Histograms

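Each parameter is spread across its range rather than collapsing to a single value. Head rotation (head_jx, head_jy) and gaze direction (eye_jx, eye_jy) span their full control surfaces. Expression parameters (eye_blink, mouth, mouth_expr) cluster near neutral, with medians of 0.070, 0.005, and 0.000, and have tails toward extreme poses, with 95th percentiles of 0.210, 0.247, and 0.214.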
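One way to make "spread across its range" measurable is to bin each parameter and count how many bins are populated. The sketch below is illustrative only: the helper `histogram_coverage`, the bin count, and the `[lo, hi]` range passed to it are assumptions, not the pipeline actually used to produce the histograms, and the sample data is synthetic.

```python
import numpy as np

def histogram_coverage(values, lo, hi, bins=20):
    """Bin values over [lo, hi] and report the fraction of non-empty bins.

    Hypothetical helper: lo, hi, and bins are assumptions chosen by the
    caller, not values taken from the dataset described above.
    Values outside [lo, hi] are ignored by np.histogram.
    """
    counts, edges = np.histogram(np.asarray(values, dtype=float),
                                 bins=bins, range=(lo, hi))
    coverage = np.count_nonzero(counts) / bins
    return counts, edges, coverage

# Synthetic stand-in for one parameter; not real data from the dataset.
rng = np.random.default_rng(0)
sample = rng.normal(loc=0.0, scale=0.4, size=10_000)
counts, edges, coverage = histogram_coverage(sample, -1.5, 1.5, bins=20)
print(f"populated bins: {coverage:.0%}")
```

A coverage close to 100% suggests the parameter exercises most of the chosen range, while a low value points to clustering. The result depends on the assumed range and bin count, so it should be read alongside the percentiles rather than in place of them.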

| Parameter | Count | Mean | Std Dev | P5 | P50 | P95 |
|---|---|---|---|---|---|---|
| head_jx | 153,451 | -0.236 | 0.531 | -1.137 | -0.300 | 0.692 |
| head_jy | 153,451 | 0.054 | 0.208 | -0.308 | 0.067 | 0.374 |
| eye_jx | 153,451 | 0.023 | 0.363 | -0.313 | -0.072 | 0.886 |
| eye_jy | 153,451 | 0.189 | 0.181 | -0.101 | 0.183 | 0.500 |
| eye_blink | 153,451 | 0.083 | 0.066 | 0.005 | 0.070 | 0.210 |
| mouth | 153,451 | 0.040 | 0.096 | 0.000 | 0.005 | 0.247 |
| mouth_expr | 153,451 | 0.030 | 0.134 | -0.007 | 0.000 | 0.214 |
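The columns in the table (count, mean, standard deviation, and the 5th, 50th, and 95th percentiles) can be reproduced from per-frame values with a few NumPy calls. This is a minimal sketch under stated assumptions: `summarize` and `format_row` are hypothetical helpers, the input arrays are synthetic, and the source does not say whether population or sample standard deviation was used, so the default `ddof=0` here is a guess.

```python
import numpy as np

def summarize(values):
    """Return count, mean, std dev, and the 5th/50th/95th percentiles."""
    arr = np.asarray(values, dtype=float)
    p5, p50, p95 = np.percentile(arr, [5, 50, 95])
    return {
        "count": int(arr.size),
        "mean": float(arr.mean()),
        "std": float(arr.std()),  # population std (ddof=0); an assumption
        "p5": float(p5),
        "p50": float(p50),
        "p95": float(p95),
    }

def format_row(name, stats):
    """Format one summary as a Markdown table row with three decimals."""
    return (
        f"| {name} | {stats['count']:,} | {stats['mean']:.3f} "
        f"| {stats['std']:.3f} | {stats['p5']:.3f} "
        f"| {stats['p50']:.3f} | {stats['p95']:.3f} |"
    )

# Synthetic stand-in data keyed by parameter name; not the real dataset.
rng = np.random.default_rng(0)
frames = {
    "head_jx": rng.normal(-0.2, 0.5, size=1_000),
    "eye_blink": rng.beta(2.0, 20.0, size=1_000),
}

print("| Parameter | Count | Mean | Std Dev | P5 | P50 | P95 |")
print("|---|---|---|---|---|---|---|")
for name, values in frames.items():
    print(format_row(name, summarize(values)))
```

Comparing the mean against P50 is a quick skew check. In the table above, eye_jx has a mean of 0.023 but a median of -0.072 and a P95 of 0.886, which indicates a long tail toward positive values.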